
How AI advances became an ethical issue

Advances in deep fake technology are enabling the creation of hyper-realistic digital effects. Disney’s use of this technology alongside VFX for young Luke Skywalker and the recent news about the movie Fall changing “more than 30 F-bombs” in post-production using deep fake-style tech point to its growing acceptance within Hollywood. VFX and deep-fakery might seem like two sides of the same coin: both are painstaking processes designed to sculpt digital material for convincing special effects. However, the ramifications of the growing sophistication of deep fake technology are far-reaching.

We lack a proper conceptual grasp of the implications of deep fake technology and how it will impact our digital lives. It's an area where ethics are still playing catch-up with developments, and it comes with some thorny issues.

Problem one: Actor consent

Firstly, there’s a huge question of consent. Traditionally, an actor would know exactly how their voice and likeness would be used. Now, when existing footage or voice recordings are being used to create entirely new material, it isn’t clear where actor rights end. (How) is an actor remunerated for the digital material created? Who owns the image or voice? And how can individuals consent to distribution if they don’t know how they will be depicted or what they will be saying?

To edit the film and gain a PG-13 rating, Fall's production team used the “TrueSync AI-based system” from Flawless to change 'F-bombs' to 'freakings' and alter “the mouth movements of the actors” (Variety). The process worked so well that actress Caroline Currey couldn't tell what she had said and what had been manipulated in post-production. “As far as I know, every movement my mouth made in that movie, my mouth made,” she told Variety. Yet while this was a success story for this particular project, confusion over what is human and what is AI puts actors in a vulnerable position.

In the case of Fall, the actors were informed of the process, consented to the edits, and digital enhancements took place over a finite period in post-production before the finalized version was screened. The modifications were superficial and served a clear, discrete purpose.

However, this isn’t always the case. AI was used to imitate Anthony Bourdain's voice last year, even though he died in 2018, a move that produced mixed reactions. Would the actors of Fall remain enthusiastic if the scope changed and more edits were made at a later date? As VentureBeat's coverage of Deepdub's 2022 funding round admits, the ramifications of using voices generated "from working actors' performances" for deep fakes are "murky". “Protection of a performer’s digital self is a critical issue for SAG-AFTRA and our members,” Screen Actors Guild national executive director Duncan Crabtree-Ireland told The Hollywood Reporter. “It is crucial that performers control the exploitation of their digital self, that any use is only with fully informed consent, and that performers are fairly compensated for such use.”

It's ethically dicey to play with someone's digital identity. Manipulation is often done without consent and introduces opportunities for the spread of misinformation. Audience members who feel misled or manipulated lose trust in producers.

Problem two: Uncanny Valley

Hours of painstaking work, plus plenty of archive material of the actor or voice in question, are required to create a realistic deep fake. Yet audiences aren’t entirely convinced by the results. Gizmodo staff reporter Andrew Liszewski confesses, “there’s still something off with these TrueSync processed clips. They don’t look as natural as the original performances do”. Edits to Fall changed only the occasional word, with AI lip adjustments on screen for a maximum of a few seconds. It remains to be seen how realistic shots would look if the AI clips were longer.

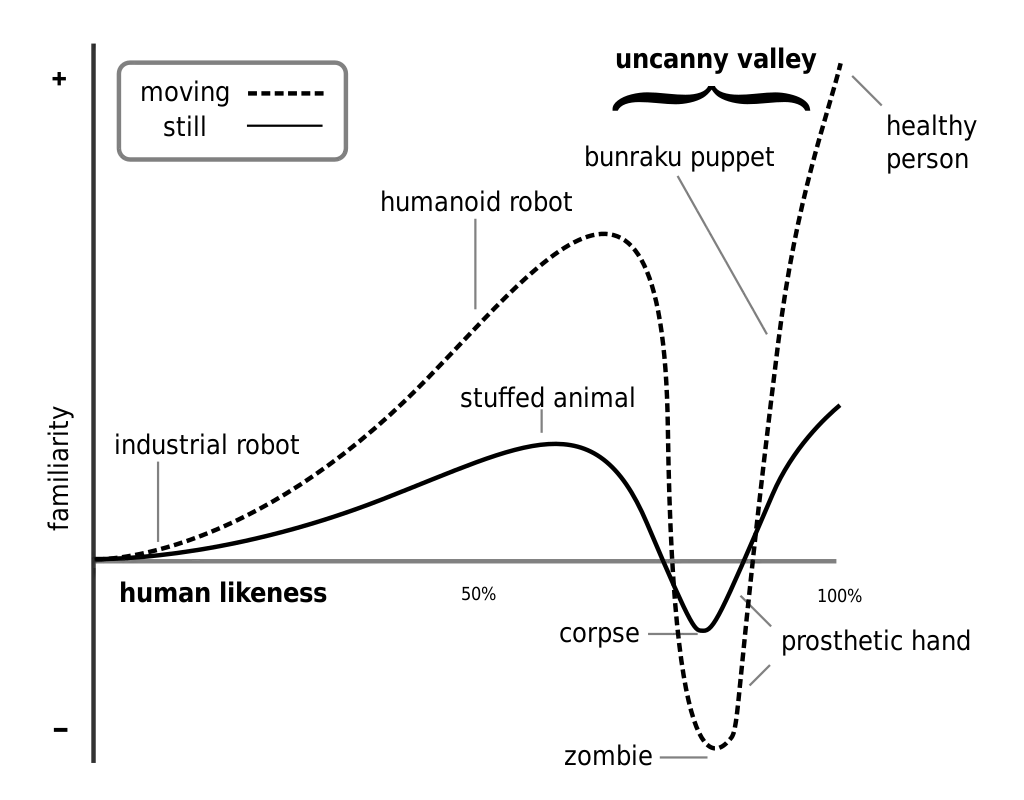

Liszewski questions the overall endeavor, asking if this deep fake approach “is more or less distracting than the options we have now for making a film accessible to a wider audience”. A lot can be learned by remembering the cautionary tale of The Polar Express – an animated film so determined to seem realistic that it hit the uncanny valley.

At Papercup, we offer a plethora of synthetic voice actors for this reason. Our voices sound human and have authentic tonal inflections, but we avoid trying to replicate the actual Bob Ross.

Problem three: Audience trust

There’s little scope for trust issues when it's just ‘f-bombs’ being censored to ‘freaking’. However, more troubling deep fakes are emerging. Satirical deep fakes, including several of Elon Musk and one testing Meta’s fake video policy, are giving way to concerning attempts at deception, such as a video circulated in March 2022 allegedly depicting Ukrainian president Zelenskyy calling for Ukrainian surrender in the ongoing war.

Working with deep fake technology comes with a high level of responsibility, and, in an era of misinformation and with the constant specter of fake news, audiences are uneasy at the prospect of being fooled. It's important to consider the emotional effect on viewers who feel tricked or deceived: there's a real risk of alienating those you attempt to entertain. Deep fake technology is only exciting to viewers when they are let in on what is 'real' or 'fake'. Otherwise, it becomes yet another breach of trust.

Summary

The changes to something like Fall are harmless enough - the actors gave consent, the broad meaning of the dialogue and scenes remains unchanged, and the director owns the technology in question. The project reveals how deep fake-style AI can be used for good.

However, it’s easy to overlook the specific circumstances that simplified the ethics of this particular project. Given the lack of regulation, those taking deep fakes further are operating in murky territory. Other uses should be considered carefully, and ensuring audience trust should be a top priority when parsing the ongoing ethical issues.
